Supervised Autonomous Decision Architecture for Fixed-Wing UAV

A first-principles, safety-critical architecture that separates mission reasoning from real-time flight control. It features a deterministic validation layer, independent watchdog monitoring, and graceful degradation to safe flight modes.

Natural language mission control meets safety-critical flight architecture

9 supervised processes | 4 independent safety layers | Ongoing (January 2026)

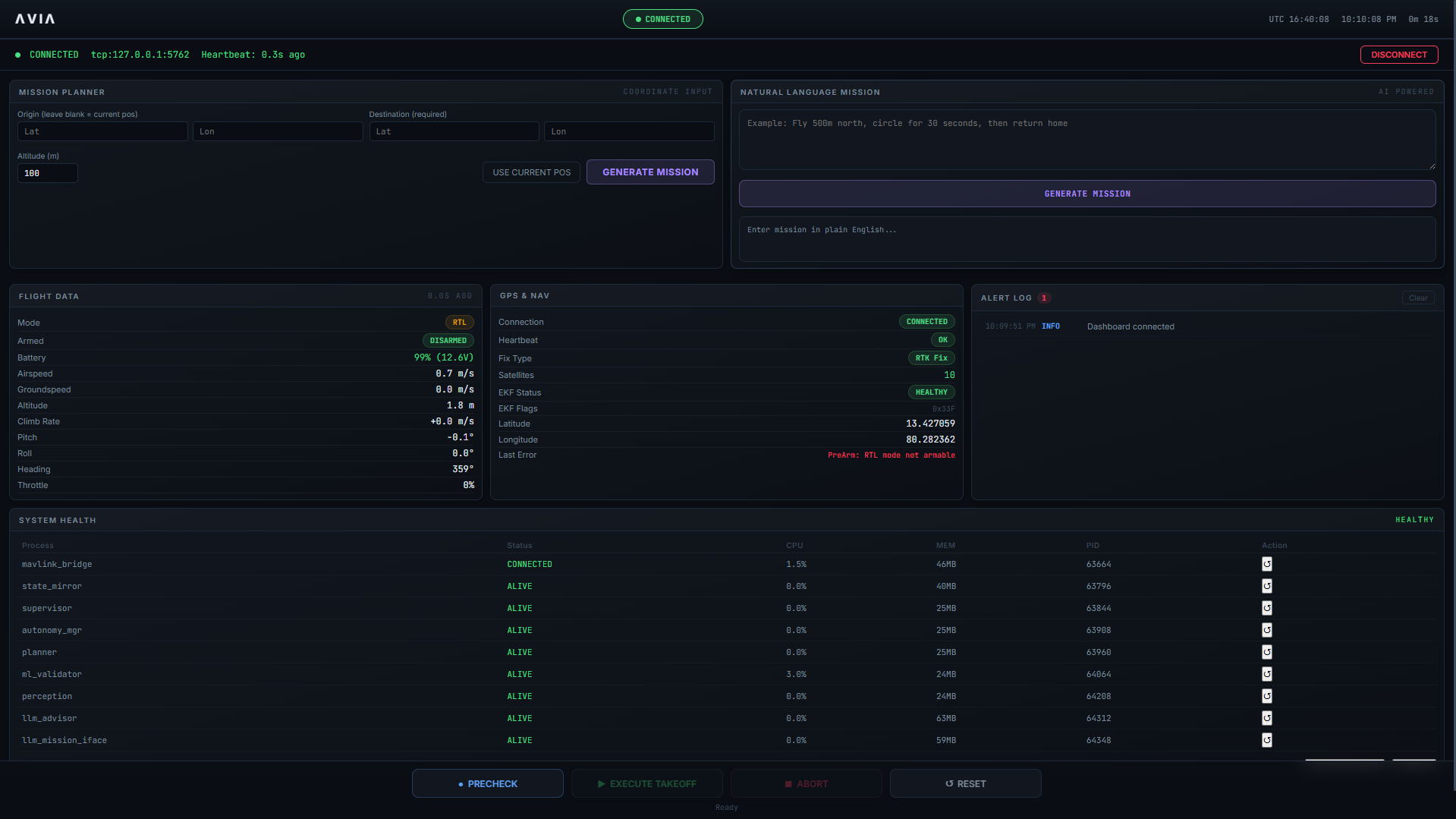

Figure 1. Mission Control Dashboard: Real-time telemetry, autonomy state machine, and natural language mission interface.

01. What This Is

I'm building an autonomous flight system where you can tell a UAV what to do in plain English, and it figures out how to do it safely. Type "fly 500 meters north, circle for photos, then come back"—the system generates a flight plan, validates it against safety constraints, asks for confirmation, then executes the mission autonomously.

The interesting part isn't just making an aircraft fly by itself. It's solving the control authority problem: how do you let a language model generate mission plans without breaking the deterministic safety guarantees that keep the aircraft flying?

02. The Core Challenge

Autonomous flight is fundamentally about who decides what, and who can override whom. When a human pilot flies, they're doing two things simultaneously: strategic decisions ("Should I land now, or circle for better wind?") and precise control (micro-adjustments to keep the aircraft stable).

The hard part is replicating this separation in software while adding a third layer—a language model that can interpret intent but might hallucinate coordinates or ignore safety limits.

03. Natural Language Interface

Missions are typed in plain English. The LLM translates them into waypoints, and a validator checks the plan for safety before anything is uploaded.

User: "Fly to GPS -35.358, 149.165, take aerial photos, then return home"

LLM: Generates 5-waypoint mission (takeoff → destination → loiter 30s → return → land)

Validator: Checks geofence (✓), altitude limits (✓), battery (✓), total distance (✓)

Dashboard: Shows mission summary, estimated time 6 min, battery 99% → 82%

User: Clicks "CONFIRM"

System: Uploads waypoints → Arms → Takeoff → Executes mission → Lands

How it works:

- Hybrid LLM system: Claude API (primary) + local Llama 3.2 (offline backup).

- Deterministic validator: Checks every waypoint against geofence limits, the 30 m to 150 m altitude band, and the 20% battery reserve requirement. LLM output is never trusted blindly. Rejects hallucinations (such as 0,0 coordinates).

- Structure constraints: Enforces TAKEOFF first, LAND last.
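The validator checks listed above can be sketched in a few lines of Python. This is a minimal illustration, not the project's actual code: the `Waypoint` type, the `validate_mission` function, the circular geofence, and the `HOME` and `GEOFENCE_RADIUS_M` values are all assumptions made for the example. Only the 30 m to 150 m altitude band, the 20% battery reserve, the 0,0 rejection, and the TAKEOFF-first/LAND-last rule come from the text. The predicted end-of-mission battery level is assumed to be supplied by the caller.

```python
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt

# Limits taken from the text.
MIN_ALT_M, MAX_ALT_M = 30.0, 150.0
BATTERY_RESERVE_PCT = 20.0
# Hypothetical geofence: a circle around an assumed home position.
HOME = (-35.363, 149.165)
GEOFENCE_RADIUS_M = 2000.0


@dataclass(frozen=True)
class Waypoint:
    kind: str  # "TAKEOFF", "WAYPOINT", "LOITER" or "LAND"
    lat: float
    lon: float
    alt_m: float


def haversine_m(a, b):
    """Great-circle distance in metres between two (lat, lon) pairs."""
    lat1, lon1, lat2, lon2 = map(radians, (*a, *b))
    h = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * 6371000.0 * asin(sqrt(h))


def validate_mission(waypoints, predicted_end_battery_pct):
    """Return a list of error strings; an empty list means the plan passed."""
    if not waypoints:
        return ["mission is empty"]
    errors = []
    if waypoints[0].kind != "TAKEOFF":
        errors.append("first item must be TAKEOFF")
    if waypoints[-1].kind != "LAND":
        errors.append("last item must be LAND")
    for i, wp in enumerate(waypoints):
        if wp.lat == 0.0 and wp.lon == 0.0:
            errors.append(f"waypoint {i}: (0, 0) coordinates look hallucinated")
            continue  # the remaining checks are meaningless for this waypoint
        if haversine_m(HOME, (wp.lat, wp.lon)) > GEOFENCE_RADIUS_M:
            errors.append(f"waypoint {i}: outside geofence")
        # Assumption: the altitude band applies to every item except LAND.
        if wp.kind != "LAND" and not MIN_ALT_M <= wp.alt_m <= MAX_ALT_M:
            errors.append(f"waypoint {i}: altitude {wp.alt_m} m outside 30-150 m")
    if predicted_end_battery_pct < BATTERY_RESERVE_PCT:
        errors.append("predicted battery at landing is below the 20% reserve")
    return errors
```

Because the function is pure and returns every violation rather than stopping at the first, the same input always produces the same verdict, and the dashboard can show the operator the full list of reasons a plan was rejected.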

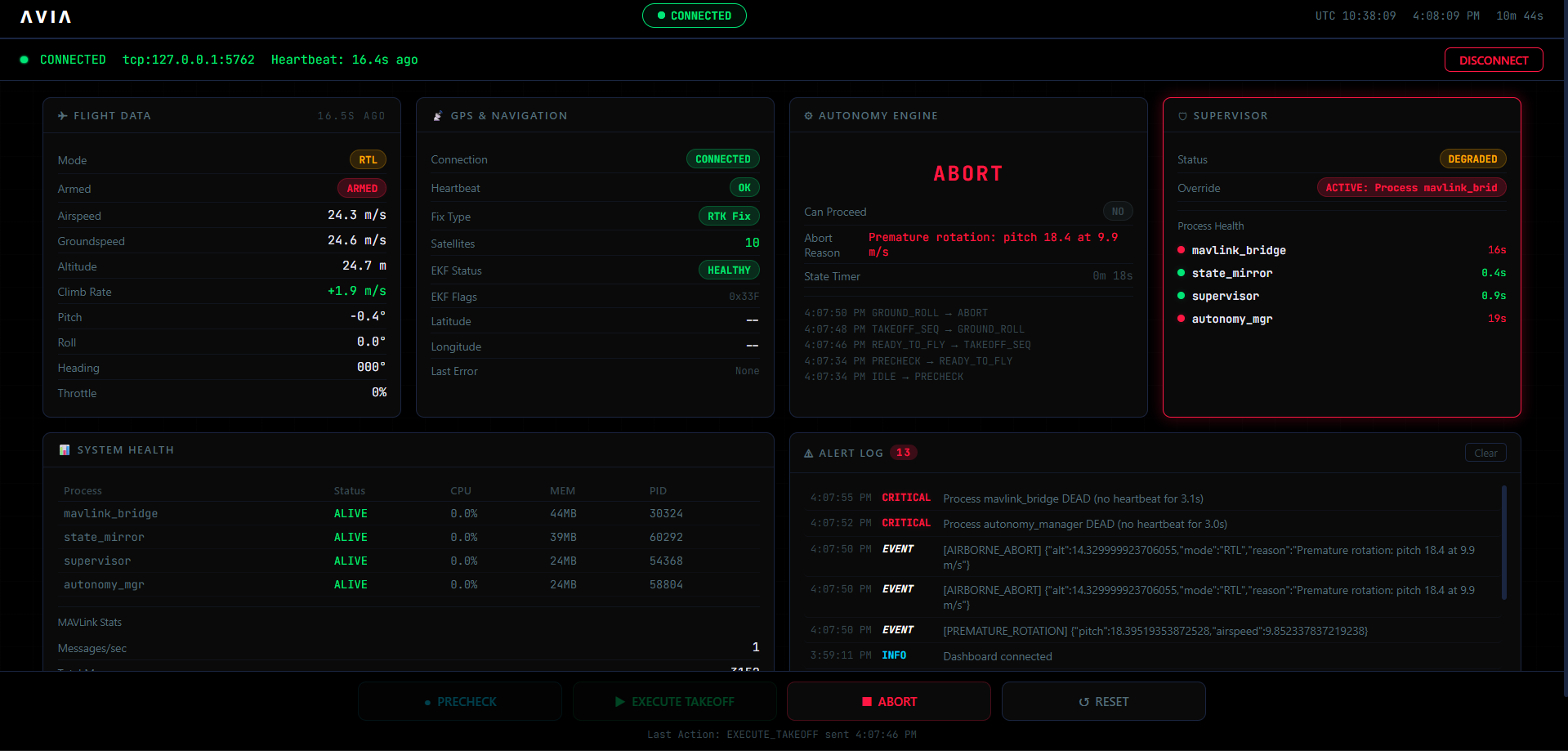

Figure 2. Simulation Environment: ArduPlane SITL.

04. Core Autonomous Capabilities

Working Now

- Autonomous takeoff with over-rotation abort

- GPS waypoint navigation (follows uploaded missions)

- Abort-to-RTL recovery (aircraft lands safely, all processes stay alive)

- Process supervision (any component crash → auto-restart within 5 seconds, infinite retry)

- Real-time web dashboard (telemetry & autonomy state)
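The process supervision item above (restart on crash, retry indefinitely) can be illustrated with a small polling loop. This is a sketch under assumptions, not the project's supervisor: the `supervise` function, its parameters, and the polling approach are hypothetical, and `max_cycles` exists only so the loop can be stopped in a demo. With the default values, a crashed process is restarted about 1.5 seconds after it exits at the latest, which stays inside the 5-second budget stated in the text.

```python
import subprocess
import time


def supervise(commands, poll_interval_s=0.5, restart_delay_s=1.0, max_cycles=None):
    """Start each command and restart any that exits.

    commands maps a process name to its argv list.
    Returns a dict of restart counts per process name.
    """
    procs = {name: subprocess.Popen(cmd) for name, cmd in commands.items()}
    restarts = {name: 0 for name in commands}
    cycles = 0
    try:
        # max_cycles=None means supervise forever (infinite retry).
        while max_cycles is None or cycles < max_cycles:
            time.sleep(poll_interval_s)
            for name, proc in list(procs.items()):
                if proc.poll() is not None:  # the process has exited
                    time.sleep(restart_delay_s)
                    procs[name] = subprocess.Popen(commands[name])
                    restarts[name] += 1
            cycles += 1
    finally:
        # Clean up children when the supervisor itself stops.
        for proc in procs.values():
            proc.terminate()
            proc.wait()
    return restarts
```

A production supervisor would likely also watch heartbeats, since a hung process never exits and so would not be caught by `poll()` alone.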

In Active Development

- Full mission state machine (takeoff → cruise → approach → final → landing)

- Sensor redundancy (GPS fails → dead reckoning; airspeed fails → GPS margin)

- Dynamic mission replanning mid-flight

- MAVLink connection stability (auto-reconnect)
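The mission state machine listed above is still in development, so the following is only a sketch of one possible shape for it. The phase names follow the sequence in the text; the `Phase` enum, the `step` function, and the rule that an abort is honoured in every phase except landing are assumptions made for illustration.

```python
from enum import Enum, auto


class Phase(Enum):
    TAKEOFF = auto()
    CRUISE = auto()
    APPROACH = auto()
    FINAL = auto()
    LANDING = auto()
    RTL = auto()  # abort target, outside the nominal sequence


# Nominal progression from the text: takeoff -> cruise -> approach -> final -> landing.
NEXT_PHASE = {
    Phase.TAKEOFF: Phase.CRUISE,
    Phase.CRUISE: Phase.APPROACH,
    Phase.APPROACH: Phase.FINAL,
    Phase.FINAL: Phase.LANDING,
}


def step(phase, phase_complete, abort=False):
    """Return the next phase given the current phase and two boolean inputs."""
    # Assumption: an abort is honoured in every phase except LANDING and RTL.
    if abort and phase not in (Phase.LANDING, Phase.RTL):
        return Phase.RTL
    if phase_complete:
        return NEXT_PHASE.get(phase, phase)  # terminal phases stay where they are
    return phase
```

Keeping the transition table as plain data makes every legal transition visible at a glance, and anything not in the table cannot happen.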

05. Architecture: Clear Boundaries

The system runs across two physically separate computers with strictly defined roles:

Tier 1: Flight Controller (Pixhawk)

Job: Keep the aircraft flying. Period.

- 100% authority over servos—no exceptions, even if Jetson dies.

- Executes hard-coded failsafes (low battery → RTL, GPS loss → loiter).

Tier 2: Mission Computer (Jetson)

Job: Understand intent, plan routes, validate safety, monitor health.

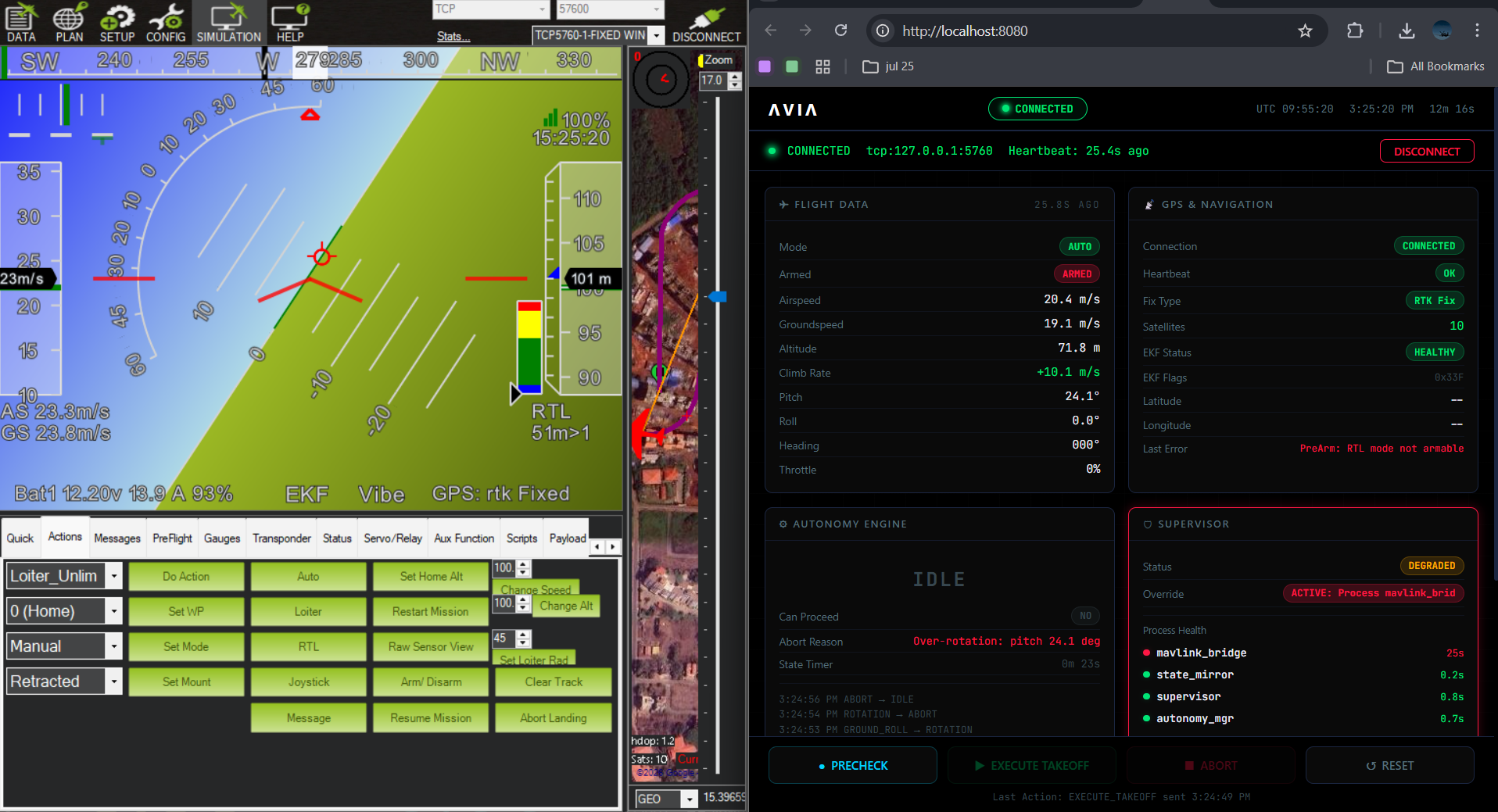

Nine independent Python processes:

- MAVLink Bridge — Serial/UDP communication.

- State Mirror — Aggregates aircraft state.

- Supervisor — Watchdog monitoring all processes.

- Autonomy Manager — State machine executing missions.

- Mission Validator — Deterministic checks on all LLM outputs.

- LLM Mission Interface — Natural language parsing (Claude + Llama).

- Path Planner — Geometric waypoint generation.

- ML Validator — Validates perception outputs.

- Process Manager — Orchestrates and handles crashes.

Figure 3. Error Reporting & Process Health: Demonstrating auto-restart capability.
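The LLM Mission Interface in the process list pairs a cloud model with a local backup. One way to structure that fallback is sketched below. The `plan_with_fallback` function, the `primary` and `fallback` callables, and the timeout value are hypothetical. The real Claude and Llama client calls are deliberately left out, so each planner is simply a function that takes a prompt and returns a plan.

```python
from concurrent.futures import ThreadPoolExecutor


def plan_with_fallback(prompt, primary, fallback, timeout_s=8.0):
    """Try the primary planner; on any error or timeout, use the fallback.

    Returns a (source, plan) tuple where source is "primary" or "fallback".
    """
    pool = ThreadPoolExecutor(max_workers=1)
    future = pool.submit(primary, prompt)
    try:
        return "primary", future.result(timeout=timeout_s)
    except Exception:
        # Covers both a timed-out call and an exception raised by the primary.
        return "fallback", fallback(prompt)
    finally:
        # Do not block on a primary call that is still running.
        pool.shutdown(wait=False)
```

Whichever planner answers, its output is still untrusted and would pass through the Mission Validator before reaching the aircraft.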

06. Graceful Degradation

If anything fails at any level, the system drops to the next safest state. Degraded capability is always preferred over unstable autonomy. Not every mission can be saved: the first priority is to not crash, and the second is to not lose the aircraft.

Core Guarantees

07. Engineering Metrics

- Codebase: ~4,000 lines

- Managed Processes: 9 independent

- Safety Layers: 4 tiers

- Process Restart: < 5 seconds

- Cloud Latency: 2-5 s (Claude)

- Local Latency: ~300 ms (Llama)

08. The Takeaway

This project reflects a consistent approach to complex systems: safety first, clear boundaries, deterministic validation of non-deterministic AI, and a relentless focus on the one thing that actually matters, which is keeping the aircraft flying.